A New AI Safety Frontier: Cross-Lab Testing to Unite the Industry

- OpenAI co-founder Ilya Sutskever advocates cross-lab testing to strengthen AI safety amid rapid technological advancement and industry-wide risks.
- Anthropic's browser-based Claude pilot highlights security challenges such as prompt injection attacks, prompting enhanced mitigation strategies.
- A study reveals poor compliance with voluntary safety commitments by major AI firms, including Apple, raising questions about the effectiveness of self-regulation.
- The Cloud Security Alliance's AI Safety Initiative offers frameworks and RiskRubric.ai, a scoring system for evaluating large language models.

OpenAI co-founder Ilya Sutskever has called for cross-lab testing, in which AI systems are evaluated across development labs rather than only by the lab that built them, as a critical measure for ensuring the safety of artificial intelligence (AI). His statement comes amid growing concern over the risks of rapid AI advancement, with industry leaders emphasizing the need for collaborative, standardized safety protocols. The proposal aligns with broader efforts to strengthen AI safety and mitigate potential harms as the technology continues to evolve [1].

The need for such collaboration is underscored by recent developments in AI deployment and regulation. Anthropic, for instance, has introduced a pilot program that lets its AI assistant, Claude, operate directly in users' browsers. The initiative aims to make AI more useful by integrating it into core digital workflows, but it has also highlighted the significant safety and security challenges of browser-based AI agents. Prompt injection attacks, in which malicious actors manipulate an AI's behavior by embedding hidden instructions in the content it reads, have emerged as a key risk. In response, Anthropic has implemented mitigations such as site-level permissions, action confirmations, and advanced classifiers that detect suspicious patterns [2].
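
To make these layered mitigations more concrete, here is a minimal Python sketch of how a browser agent might gate its actions. Everything in it is hypothetical: `ActionGate`, `HIGH_RISK_ACTIONS`, and `INJECTION_PATTERNS` are illustrative names, not Anthropic's implementation, and the simple regex check stands in for the trained classifiers the company describes.

```python
import re
from dataclasses import dataclass, field
from urllib.parse import urlparse

# Hypothetical set of actions treated as high-risk (an assumption for illustration).
HIGH_RISK_ACTIONS = {"purchase", "send_email", "delete", "submit_form"}

# Naive regex heuristics standing in for a trained prompt-injection classifier.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"disregard (your|the) (system prompt|instructions)", re.IGNORECASE),
    re.compile(r"do not (tell|inform) the user", re.IGNORECASE),
]


@dataclass
class ActionGate:
    """Hypothetical gate deciding whether a browser agent may act on a page."""

    allowed_sites: set[str] = field(default_factory=set)

    def looks_injected(self, page_text: str) -> bool:
        # Flag page content that matches any known injection phrasing.
        return any(p.search(page_text) for p in INJECTION_PATTERNS)

    def decide(self, url: str, action: str, page_text: str) -> str:
        host = urlparse(url).hostname or ""
        # Site-level permission: the user must have granted access to this host.
        if host not in self.allowed_sites:
            return "deny: site not permitted"
        # Suspicious page content blocks the action outright.
        if self.looks_injected(page_text):
            return "deny: possible prompt injection"
        # High-risk actions require explicit user confirmation before running.
        if action in HIGH_RISK_ACTIONS:
            return "confirm"
        return "allow"


gate = ActionGate(allowed_sites={"mail.example.com"})
print(gate.decide("https://mail.example.com/inbox", "send_email", "Hello"))
```

Keyword matching is trivially evaded by rephrasing, so a real system would need far stronger detection; the sketch only shows how permission, detection, and confirmation checks can be applied in sequence.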

Such risks are not confined to individual companies. A recent study by researchers at Brown, Harvard, and Stanford found that many AI companies have not fully honored the voluntary safety commitments secured by the Biden administration beginning in 2023. Apple, for example, performed poorly, showing evidence of compliance with only one in eight commitments. The study highlights the limits of self-regulation in a rapidly evolving industry and raises questions about whether voluntary measures can ensure accountability and safety [5].

In response to these challenges, the Cloud Security Alliance (CSA) launched its AI Safety Initiative in late 2023, bringing together industry leaders, government agencies, and academic institutions to develop practical tools and frameworks for AI risk management. The initiative provides organizations with AI readiness checklists, governance frameworks, and security guidelines, with the goal of aligning technological progress with regulatory expectations. Notably, the CSA has also introduced RiskRubric.ai, a scoring system that evaluates the safety, transparency, and reliability of large language models (LLMs), offering enterprises a data-driven approach to AI adoption [4].
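
The article does not describe how RiskRubric.ai computes its scores. As a purely illustrative sketch of what a data-driven model rating can look like, the Python below combines per-dimension scores for safety, transparency, and reliability into a weighted composite and a letter grade. The weights, grade cutoffs, and names (`ModelAssessment`, `composite_score`, `letter_grade`) are assumptions, not RiskRubric.ai's methodology.

```python
from dataclasses import dataclass

# Hypothetical weights; RiskRubric.ai's real methodology is not described here.
WEIGHTS = {"safety": 0.4, "transparency": 0.3, "reliability": 0.3}


@dataclass
class ModelAssessment:
    name: str
    scores: dict[str, float]  # each dimension scored on a 0-100 scale


def composite_score(assessment: ModelAssessment) -> float:
    """Weighted average of per-dimension scores (hypothetical scheme)."""
    missing = WEIGHTS.keys() - assessment.scores.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return sum(WEIGHTS[d] * assessment.scores[d] for d in WEIGHTS)


def letter_grade(score: float) -> str:
    """Map a 0-100 composite to a letter grade using illustrative cutoffs."""
    for cutoff, grade in ((90, "A"), (80, "B"), (70, "C"), (60, "D")):
        if score >= cutoff:
            return grade
    return "F"


model = ModelAssessment("model-x", {"safety": 90, "transparency": 70, "reliability": 80})
print(model.name, letter_grade(composite_score(model)))
```

Under these made-up weights, scores of 90 (safety), 70 (transparency), and 80 (reliability) yield a composite of 81 and a grade of B. Rejecting assessments with missing dimensions keeps a model from earning a good grade simply because it was not evaluated on one axis.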

Collaborative efforts to enhance AI safety are also being supported by a growing ecosystem of funders and grant programs. Organizations such as the Long-Term Future Fund, the Survival and Flourishing Fund, and the AI Safety Fund are providing financial support to researchers, entrepreneurs, and institutions working on AI risk mitigation. These initiatives aim to address long-term existential risks while also promoting responsible innovation. Additionally, venture capital firms like Juniper Ventures and Mythos Ventures are investing in startups developing tools to improve AI security, compliance, and governance [6].

The cross-lab testing Sutskever advocates represents a pivotal step toward addressing these systemic challenges. By enabling shared standards and transparent evaluation across AI development labs, the industry can foster greater trust and accountability. This approach becomes more important as AI systems grow more complex and capable, making a unified effort to evaluate risks before deployment essential. OpenAI, Anthropic, and other key stakeholders have both an opportunity and a responsibility to lead this transition by embracing collaborative safety protocols and setting a precedent for responsible AI innovation [1].

You may also like

Bitcoin Faces Intensifying Sell-Off as ETF Outflows and Leverage Unwinds Pressure Markets

Solana ETF Hit 18-Day Inflow Streak

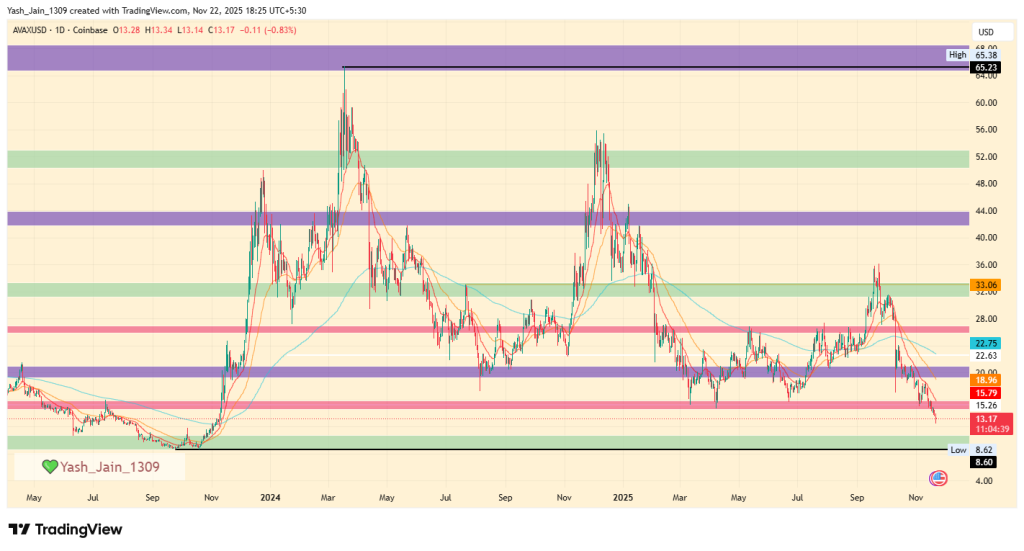

Avalanche Price Prediction 2025, 2026 – 2030: Will AVAX Price Hit $100?

PEPE Price Prediction 2025, 2026 – 2030: Can Pepe Memecoin Reach 1 Cent?